What’s Under the Hood

OpenCV provides LBPH (Local Binary Patterns Histograms) face recognition, which can be used to identify the face presented by the user. It is important to understand the difference between facial detection and facial recognition.

Facial detection simply tells the user whether there is a face in the frame; most often a box is drawn around the face, marking what is known as the ROI, or region of interest. Facial recognition, which is what I will be discussing, is the task of identifying whom the face in the frame belongs to, with respect to a pre-loaded training set. LBPH facial recognition uses a dataset of pictures that the user provides to train the system prior to prediction. At prediction time, the back end compares the histograms computed from the frame against those computed from each training picture and reports the closest match.

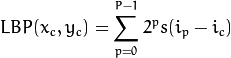

The LBPH approach describes an image in terms of its local 2-D structure, whereas holistic methods such as Eigenfaces treat the whole image as a single high-dimensional vector, which leaves more room for error. LBPH instead summarizes the local structure of the image by taking each pixel as a center reference and comparing it with its eight neighboring pixels. Following the formulation in the OpenCV documentation, a neighbor whose intensity is greater than or equal to that of the center pixel is labeled with a one; otherwise it is labeled with a zero. Reading the eight labels in a fixed order yields an 8-bit binary number, the LBP code of the center pixel. The picture below demonstrates what that looks like graphically. The equation for the computation is

$$LBP(x_c, y_c) = \sum_{p=0}^{P-1} 2^p \, s(i_p - i_c)$$

with $(x_c, y_c)$ as the central pixel with intensity $i_c$, and $i_p$ being the intensity of the neighbor pixel $p$ (there are $P$ neighbors; $P = 8$ in the basic operator). $s$ is the sign function defined as:

$$s(x) = \begin{cases} 1 & \text{if } x \geq 0 \\ 0 & \text{otherwise} \end{cases}$$
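To make the operator concrete, here is a minimal NumPy sketch of the basic 3x3 LBP described above. It is illustrative rather than OpenCV's actual implementation; the function name lbp_basic and the clockwise-from-top-left bit ordering are my own assumptions (any fixed ordering works, as long as it is used consistently).

```python
import numpy as np


def lbp_basic(gray):
    """Basic 3x3 LBP: every interior pixel becomes an 8-bit code.

    Bit p is s(i_p - i_c), i.e. 1 when neighbor p is >= the center pixel.
    Border pixels are skipped, so the output is 2 pixels smaller per axis.
    """
    g = gray.astype(np.int32)
    h, w = g.shape
    center = g[1:-1, 1:-1]
    # Neighbor offsets (dy, dx), walked clockwise starting at the top-left
    # corner; this ordering is an assumed convention of this sketch.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
               (1, 1), (1, 0), (1, -1), (0, -1)]
    codes = np.zeros_like(center)
    for p, (dy, dx) in enumerate(offsets):
        # Shifted view holding neighbor p of every interior pixel
        neighbor = g[1 + dy:h - 1 + dy, 1 + dx:w - 1 + dx]
        codes += (neighbor >= center).astype(np.int32) << p
    return codes.astype(np.uint8)
```

For the 3x3 patch [[6, 5, 2], [7, 6, 1], [9, 8, 7]], the center value 6 gets the code 241 with this ordering: the top-left, bottom-right, bottom, bottom-left and left neighbors are all >= 6, setting bits 0, 4, 5, 6 and 7.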

After introducing this operator, its creators found that a fixed 3x3 neighborhood like the one described above fails to capture details that differ in scale. This problem was fixed by using a circular neighborhood with a variable radius and a variable number of sample points.
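Below is a sketch of that circular (extended) operator for a single pixel, assuming the sample-point convention used in the OpenCV documentation, $x_p = x_c + R\cos(2\pi p / P)$ and $y_p = y_c - R\sin(2\pi p / P)$. Sample points that fall between pixels are bilinearly interpolated. The helper names bilinear and circular_lbp_code are hypothetical, and the small tolerance in the comparison is my own addition to guard against floating-point round-off.

```python
import math

import numpy as np


def bilinear(gray, x, y):
    """Bilinearly interpolate gray at the real-valued point (x=column, y=row)."""
    h, w = gray.shape
    x0, y0 = math.floor(x), math.floor(y)
    # Clamp the far corner so points on the last row/column stay in bounds
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    fx, fy = x - x0, y - y0
    top = (1 - fx) * gray[y0, x0] + fx * gray[y0, x1]
    bottom = (1 - fx) * gray[y1, x0] + fx * gray[y1, x1]
    return (1 - fy) * top + fy * bottom


def circular_lbp_code(gray, xc, yc, radius=1.0, points=8, tol=1e-9):
    """LBP code of pixel (xc, yc) from `points` samples on a circle of `radius`.

    (xc, yc) must lie at least `radius` pixels away from the image border.
    """
    g = gray.astype(np.float64)
    center = g[yc, xc]
    code = 0
    for p in range(points):
        angle = 2.0 * math.pi * p / points
        xp = xc + radius * math.cos(angle)
        yp = yc - radius * math.sin(angle)
        # s(i_p - i_c), with a small tolerance for floating-point round-off
        if bilinear(g, xp, yp) - center >= -tol:
            code |= 1 << p
    return code
```

With radius=1 and points=8 this roughly reproduces the basic operator, except that the diagonal samples are interpolated; larger radii and more points let the same code describe coarser structures.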

An example of the Local Binary Pattern image created from a face picture can be seen below.

This LBP image is then divided into a grid of cells; a histogram is computed for each cell, and the histograms are concatenated into a single feature vector that is used for prediction.
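A minimal sketch of that feature-vector step, taking a 2-D array of LBP codes as input. The 8x8 default grid mirrors the default grid_x and grid_y of OpenCV's LBPH recognizer; normalizing each cell histogram and dropping leftover pixels when the image size is not divisible by the grid are choices of this sketch, and the function name spatial_histogram is hypothetical.

```python
import numpy as np


def spatial_histogram(lbp_codes, grid_x=8, grid_y=8, bins=256):
    """Split an LBP code image into grid_y x grid_x cells and concatenate
    one normalized histogram per cell into a single feature vector."""
    h, w = lbp_codes.shape
    cell_h, cell_w = h // grid_y, w // grid_x  # leftover pixels are ignored
    features = []
    for gy in range(grid_y):
        for gx in range(grid_x):
            cell = lbp_codes[gy * cell_h:(gy + 1) * cell_h,
                             gx * cell_w:(gx + 1) * cell_w]
            # One bin per possible 8-bit LBP code
            hist, _ = np.histogram(cell, bins=bins, range=(0, bins))
            total = hist.sum()
            features.append(hist / total if total else hist.astype(float))
    return np.concatenate(features)
```

Keeping one histogram per cell, instead of one for the whole face, preserves where each texture occurs (eyes, mouth, and so on), which a single global histogram would lose.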

Our Implementation

Using OpenCV and Python, we can create a simple script that performs lightweight facial recognition in a few steps:

1. Load the images that will be used as the training set.
2. Run them through an OpenCV face “detection” algorithm to crop each face (ROI).
3. Create your LBPHFaceRecognizer.
4. Train it with the cropped images and their labels.
5. Start a live OpenCV VideoCapture.
6. Use each frame to crop live faces with the same face “detection” algorithm.
7. Using the predict function, call the recognizer with the cropped face from step 6.
8. Print the name of the predicted person for the user.
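To show what the predict step does conceptually, here is a toy nearest-neighbor recognizer over LBP feature vectors. It is a hedged sketch, not OpenCV's code: to my understanding, OpenCV's LBPH recognizer likewise compares histograms with a chi-square-style distance and returns the closest training label, but the class name NearestHistogramRecognizer and the exact distance formula below are my own choices.

```python
import numpy as np


def chi_square(h1, h2, eps=1e-12):
    """Symmetric chi-square distance: sum of 2 * (h1 - h2)^2 / (h1 + h2)."""
    h1 = np.asarray(h1, dtype=np.float64)
    h2 = np.asarray(h2, dtype=np.float64)
    # eps avoids 0/0 for bins that are empty in both histograms
    return float(np.sum(2.0 * (h1 - h2) ** 2 / (h1 + h2 + eps)))


class NearestHistogramRecognizer:
    """Stores one feature vector per training image and predicts the label
    of the closest one (hypothetical stand-in for the train/predict steps)."""

    def __init__(self):
        self.features = []
        self.labels = []

    def train(self, features, labels):
        self.features = [np.asarray(f, dtype=np.float64) for f in features]
        self.labels = list(labels)

    def predict(self, query):
        # Distance from the query to every stored training vector
        distances = [chi_square(f, query) for f in self.features]
        best = int(np.argmin(distances))
        return self.labels[best], distances[best]
```

Like the label and confidence pair returned by OpenCV's Python predict, a lower distance here means a closer match, so a threshold on it is one way to reject faces that are not in the training set.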

A sample of the training code is shown below; face_detect is a helper function I wrote for ROI cropping.

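```python
import os

import cv2
import numpy

# face_detect() (ROI cropping) and livedetect() (live capture loop) are my own
# helper functions, not part of OpenCV. LBPH only works on single-channel
# images, so face_detect() is assumed to return a grayscale face crop.


def main():
    filename = os.path.join(os.getcwd(), 'facepics')

    # Detect and crop the face in each training photo (flag 1 = load in color).
    # Note: aurash0 and aurash1 both load aurian.jpg; a second, distinct photo
    # of the same person would give the recognizer more to learn from.
    aurash0 = face_detect(cv2.imread(os.path.join(filename, "aurian.jpg"), 1))
    aurash1 = face_detect(cv2.imread(os.path.join(filename, "aurian.jpg"), 1))
    emma0 = face_detect(cv2.imread(os.path.join(filename, "emma.jpg"), 1))
    emma1 = face_detect(cv2.imread(os.path.join(filename, "emma3.jpg"), 1))

    # OpenCV 2.4 API; with OpenCV 3.3+ and the contrib face module use
    # cv2.face.LBPHFaceRecognizer_create() instead
    recognizer = cv2.createLBPHFaceRecognizer()

    # One integer label per training image: 1 and 2 identify the two people
    trainingImages = [aurash0, aurash1, emma0, emma1]
    trainingLabels = numpy.array([1, 1, 2, 2])
    recognizer.train(trainingImages, trainingLabels)

    livedetect(recognizer)


if __name__ == "__main__":
    main()
```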

The call to livedetect performs the live-capture steps listed above: capturing frames, cropping faces, calling predict, and printing the name of the match.